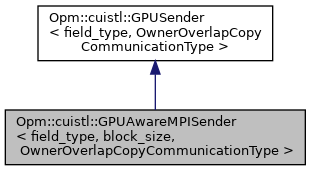

Derived class of GPUSender that handles MPI communication using CUDA-aware MPI. The copyOwnerToAll function uses MPI calls that refer to data residing on the GPU in order to send it directly to other GPUs, skipping the staging step on the CPU.


#include <CuOwnerOverlapCopy.hpp>

| Type | Member | Description |
| --- | --- | --- |
| using | X = CuVector< field_type > | |
| | GPUAwareMPISender (const OwnerOverlapCopyCommunicationType &cpuOwnerOverlapCopy) | |
| void | copyOwnerToAll (const X &source, X &dest) const override | Copies owner values from source to dest across processes. Per the class description, this override passes GPU-resident data to MPI directly rather than staging it on the CPU. |
| void | project (X &x) const | Projects x onto the owned subspace. |
| void | dot (const X &x, const X &y, field_type &output) const | Computes the dot product of x and y over the owned indices, then sums the result across MPI processes. |
| field_type | norm (const X &x) const | Computes the l^2-norm of x across processes. |

template<class field_type, int block_size, class OwnerOverlapCopyCommunicationType>

class Opm::cuistl::GPUAwareMPISender< field_type, block_size, OwnerOverlapCopyCommunicationType >

Derived class of GPUSender that handles MPI communication using CUDA-aware MPI. The copyOwnerToAll function uses MPI calls that refer to data residing on the GPU in order to send it directly to other GPUs, skipping the staging step on the CPU.

Template Parameters

| Parameter | Description |
| --- | --- |
| field_type | float or double |
| block_size | block size of the block elements in the matrix |
| OwnerOverlapCopyCommunicationType | typically a Dune::LinearOperator::communication_type |
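To illustrate what block_size means for index handling, here is a minimal host-side sketch. It assumes that each block stores block_size consecutive scalars, so a block-level index maps to block_size scalar indices. The helper name expandBlockIndices is hypothetical and is not part of the documented API; whether the class expands indices exactly this way is an assumption.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical helper (not part of the documented API): expands block-level
// indices into scalar indices, assuming each block holds block_size
// consecutive scalars in the flat vector.
template <int block_size>
std::vector<int> expandBlockIndices(const std::vector<int>& blockIndices)
{
    static_assert(block_size > 0, "block_size must be positive");
    std::vector<int> scalarIndices;
    scalarIndices.reserve(blockIndices.size() * static_cast<std::size_t>(block_size));
    for (int block : blockIndices) {
        assert(block >= 0);
        // Block b covers scalar positions [b * block_size, (b + 1) * block_size).
        for (int component = 0; component < block_size; ++component) {
            scalarIndices.push_back(block * block_size + component);
        }
    }
    return scalarIndices;
}
```

With block_size = 3, the block indices 0 and 2 would cover the scalar positions 0 to 2 and 6 to 8 under this layout assumption.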


◆ GPUAwareMPISender()

template<class field_type , int block_size, class OwnerOverlapCopyCommunicationType >

| Opm::cuistl::GPUAwareMPISender< field_type, block_size, OwnerOverlapCopyCommunicationType >::GPUAwareMPISender (const OwnerOverlapCopyCommunicationType & cpuOwnerOverlapCopy) | inline |

◆ copyOwnerToAll()

template<class field_type , int block_size, class OwnerOverlapCopyCommunicationType >

◆ dot()

template<class field_type , class OwnerOverlapCopyCommunicationType >

| void Opm::cuistl::GPUSender< field_type, OwnerOverlapCopyCommunicationType >::dot (const X & x, const X & y, field_type & output) const | inline, inherited |
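The member summary describes dot as a dot product over the owned indices followed by a sum across MPI processes. The sketch below mimics that on the host only: plain std::vector stands in for CuVector, the ownerIndices field plays the role of m_indicesOwner, and a loop over simulated ranks replaces the MPI reduction. All names here (RankData, ownedDot, globalDot) are hypothetical and chosen for illustration.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Stand-in for the data held by one MPI rank. Host vectors replace CuVector,
// and ownerIndices plays the role of m_indicesOwner (an assumption).
struct RankData {
    std::vector<double> x;
    std::vector<double> y;
    std::vector<int> ownerIndices;
};

// Local part: dot product restricted to the indices this rank owns.
double ownedDot(const RankData& rank)
{
    assert(rank.x.size() == rank.y.size());
    double sum = 0.0;
    for (int i : rank.ownerIndices) {
        assert(i >= 0 && static_cast<std::size_t>(i) < rank.x.size());
        sum += rank.x[i] * rank.y[i];
    }
    return sum;
}

// Global part: a loop over simulated ranks stands in for the MPI sum.
double globalDot(const std::vector<RankData>& ranks)
{
    double total = 0.0;
    for (const RankData& rank : ranks) {
        total += ownedDot(rank);
    }
    return total;
}
```

Restricting the local sum to owned indices means an entry that is present on several ranks contributes to the global result exactly once.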

◆ norm()

template<class field_type , class OwnerOverlapCopyCommunicationType >
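The member summary states that norm computes the l^2-norm of x across processes. A natural reading is the square root of the owned-index dot product of x with itself, summed over all ranks; the host-only sketch below follows that assumption. The names OwnedVector, ownedSquaredSum, and globalNorm are hypothetical, std::vector replaces CuVector, and a loop over simulated ranks replaces the MPI reduction.

```cpp
#include <cassert>
#include <cmath>
#include <cstddef>
#include <vector>

// Stand-in for one rank's view of a distributed vector (hypothetical type).
struct OwnedVector {
    std::vector<double> values;
    std::vector<int> ownerIndices; // plays the role of m_indicesOwner
};

// Sum of squares over the entries this rank owns.
double ownedSquaredSum(const OwnedVector& v)
{
    double sum = 0.0;
    for (int i : v.ownerIndices) {
        assert(i >= 0 && static_cast<std::size_t>(i) < v.values.size());
        sum += v.values[i] * v.values[i];
    }
    return sum;
}

// Global l^2-norm: sum the per-rank contributions (simulating the MPI sum),
// then take the square root once at the end.
double globalNorm(const std::vector<OwnedVector>& ranks)
{
    double total = 0.0;
    for (const OwnedVector& rank : ranks) {
        total += ownedSquaredSum(rank);
    }
    return std::sqrt(total);
}
```

The square root is taken after the reduction; taking it per rank and summing would not give the l^2-norm of the distributed vector.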

◆ project()

template<class field_type , class OwnerOverlapCopyCommunicationType >
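The member summary says project maps x to the owned subspace. One plausible interpretation, assumed here, is that entries at copy (non-owned) indices are set to zero while owned entries are left unchanged. The host-only sketch below shows that interpretation; projectToOwned is a hypothetical name, std::vector replaces CuVector, and copyIndices plays the role of m_indicesCopy.

```cpp
#include <cassert>
#include <cstddef>
#include <vector>

// Hypothetical host-side illustration: zero every entry of x that this rank
// does not own. copyIndices mirrors the role of m_indicesCopy (an assumption).
void projectToOwned(std::vector<double>& x, const std::vector<int>& copyIndices)
{
    for (int i : copyIndices) {
        assert(i >= 0 && static_cast<std::size_t>(i) < x.size());
        x[i] = 0.0;
    }
}
```

Under this interpretation the operation is idempotent: applying it a second time leaves x unchanged.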

◆ m_cpuOwnerOverlapCopy

template<class field_type , class OwnerOverlapCopyCommunicationType >

| const OwnerOverlapCopyCommunicationType& Opm::cuistl::GPUSender< field_type, OwnerOverlapCopyCommunicationType >::m_cpuOwnerOverlapCopy | protected, inherited |

◆ m_indicesCopy

template<class field_type , class OwnerOverlapCopyCommunicationType >

| std::unique_ptr<CuVector<int> > Opm::cuistl::GPUSender< field_type, OwnerOverlapCopyCommunicationType >::m_indicesCopy | mutable, protected, inherited |

◆ m_indicesOwner

template<class field_type , class OwnerOverlapCopyCommunicationType >

| std::unique_ptr<CuVector<int> > Opm::cuistl::GPUSender< field_type, OwnerOverlapCopyCommunicationType >::m_indicesOwner | mutable, protected, inherited |

◆ m_initializedIndices

template<class field_type , class OwnerOverlapCopyCommunicationType >

|

|

mutable, protected, inherited |

The documentation for this class was generated from the following file:

|